A multichannel lidar scans its environment with billions of photons per second and, if rotating, covers a full 360 degrees to see the surrounding environment completely. It highlights objects and makes them recognizable, and it detects movement on whatever platform it is mounted on, so lidar can handle object recognition for both moving and stationary objects. Furthermore, because it is its own light source, lidar can even see at night. Compared to radar, lidar offers much greater spatial resolution.

The advantage over cameras is also clear.

Cameras usually depict the world in 2D. They can also fall short in critical lighting conditions, they lack the capability for instant object measurement, and they require much more computing power for advanced evaluations.

All of this makes lidar sensors ideally suited to contribute to the automation of any kind of vehicle, especially, of course, AGVs, cars and commercial vehicles.

But there’s more to it. Now that the sensors have reached a high level of maturity and prices have become attractive, it is also worth considering the extremely valuable contribution this technology can make to making general traffic in our cities sustainably safer. In the following, let’s look at how lidar can help monitor the flow of traffic at city intersections and provide analysis functions to make the traffic space safer.

Lidar sensors can create a real-time 3D map of roads and intersections, providing precise traffic monitoring and analytics. The key is to apply deep learning to transform raw lidar data into actionable road usage and safety information. From turning movement counts to the analysis of near misses between vehicles, pedestrians, and cyclists, a lidar-based monitoring system reliably detects all road users in any weather or lighting condition.

The benefits of using lidar together with deep learning are obvious. It delivers multimodality because it detects and classifies, by day and by night, all road users, including vehicles, pedestrians, and cyclists, while incumbent systems (like radar and inductive loop detectors) detect vehicles only.

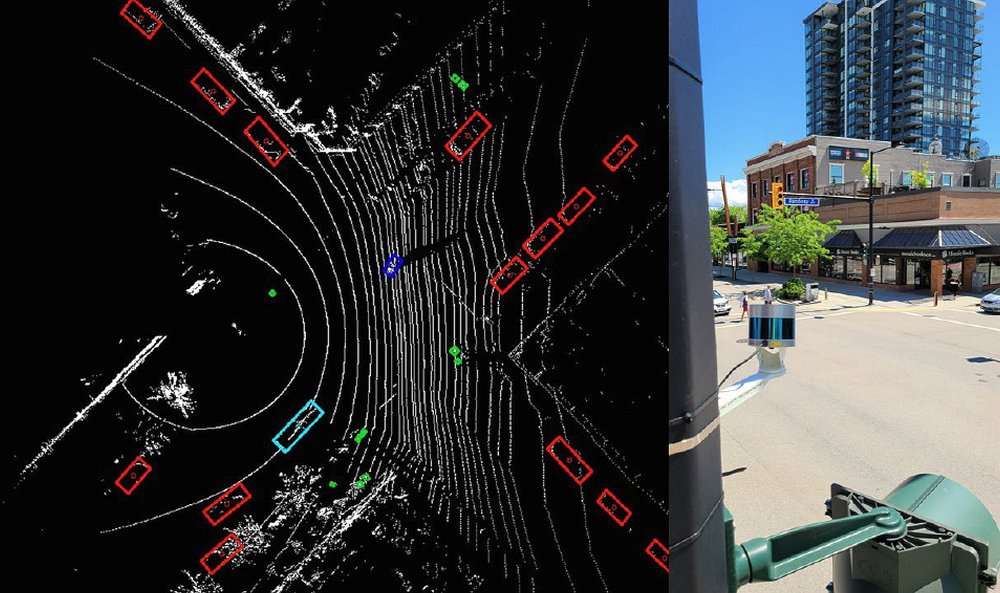

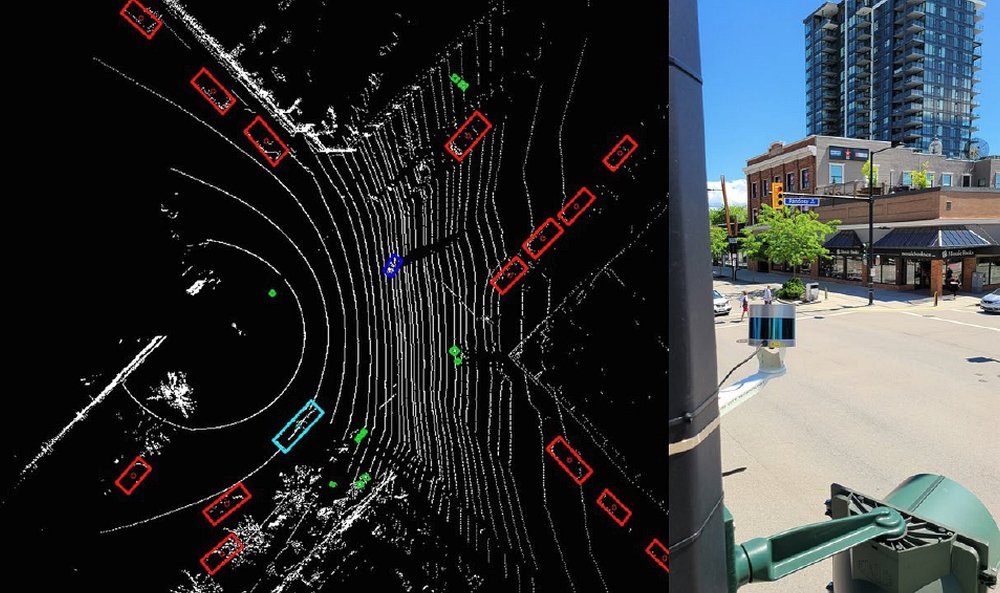

Fig. 1 shows a 3D representation of an intersection in Toronto (Yonge/Finch) generated by a lidar (left) along with a sample installation (right).

A major benefit of lidar technology is that the raw sensor data output, as seen in Fig. 1, can be reliably processed on-site. All that is required is appropriate AI perception software that detects and classifies all the objects within each frame and assigns them unique IDs, recording their x and y coordinates along with their speed, direction, length, and width.
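To make the per-object output more concrete, here is a minimal Python sketch of what one record per detected object and frame might look like. The class name `TrackedObject`, the field names, the units, and the heading convention (degrees, counter-clockwise from the x axis) are all assumptions made for illustration; the article does not specify the actual output format of the perception software.

```python
import math
from dataclasses import dataclass
from typing import Tuple


@dataclass(frozen=True)
class TrackedObject:
    """One detected road user in one lidar frame (hypothetical field names)."""
    track_id: int       # unique ID assigned by the perception software
    object_class: str   # e.g. "pedestrian", "cyclist", "passenger_vehicle"
    x: float            # position in metres, sensor-local frame (assumed)
    y: float
    speed: float        # metres per second (assumed unit)
    heading_deg: float  # direction of travel, degrees CCW from the x axis (assumed)
    length: float       # bounding box length in metres
    width: float        # bounding box width in metres

    def velocity(self) -> Tuple[float, float]:
        """Split speed and direction into x and y velocity components."""
        rad = math.radians(self.heading_deg)
        return (self.speed * math.cos(rad), self.speed * math.sin(rad))


# A single frame is then simply a list of such records.
frame = [
    TrackedObject(17, "pedestrian", 3.2, -1.5, 1.4, 90.0, 0.5, 0.5),
    TrackedObject(42, "passenger_vehicle", -8.0, 2.1, 11.0, 0.0, 4.6, 1.8),
]
pedestrians = [obj for obj in frame if obj.object_class == "pedestrian"]
```

Keeping the record flat like this makes it straightforward to hand the same data to the application layers discussed further on.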

Fig. 2 provides a bird’s-eye view of the lidar frame along with the output of the perception software (the lidar is mounted at a height of 4.5 m at the Pandosy/Bernard intersection in Kelowna). As seen in the picture, each object is detected with a bounding box around it, color-coded by object class: green for pedestrians, red for passenger vehicles, blue for cyclists. Unlike radar-based solutions, this technology precisely detects and classifies road users whether they are stationary or moving and, importantly, measures the absolute distance to the objects.

Fig. 2 – A bird’s-eye view of an intersection with perception layer (left), installed sensor in Kelowna, BC (right)

The output of this system can now be passed into different application layers, including traffic actuation, advanced signal performance measures, and, for example, surrogate safety analysis.

Actuation Application:

Actuation applications can be realized by implementing virtual loops. Think about custom-shaped virtual loops that can be pre-defined by a system user. This provides the flexibility of having virtual loops defined for a specific type of road user, for example providing priority to a specific type of road user in a shared lane.

Fig. 3 shows a sample virtual loop definition at an intersection. Blue and green color virtual loops were defined for vehicles and pedestrians, respectively. As shown in the picture, virtual loops #6 and #10 have been activated because of the vehicles detected by the system. Virtual loop #3 has been activated because of the presence of pedestrians on crosswalks.
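As a rough illustration of the idea, a class-specific virtual loop can be sketched as a polygon plus the set of road user classes allowed to trigger it. The names `VirtualLoop` and `active_loops`, the detection tuple layout, and the coordinates below are hypothetical, and the ray-casting point-in-polygon test is one common choice rather than the method used by any particular product.

```python
from dataclasses import dataclass
from typing import FrozenSet, Iterable, List, Set, Tuple

Point = Tuple[float, float]


def point_in_polygon(p: Point, polygon: List[Point]) -> bool:
    """Ray-casting test: count polygon edges crossed by a ray going in +x."""
    x, y = p
    inside = False
    n = len(polygon)
    for i in range(n):
        x1, y1 = polygon[i]
        x2, y2 = polygon[(i + 1) % n]
        if (y1 > y) != (y2 > y):  # edge straddles the horizontal line through p
            x_cross = x1 + (y - y1) * (x2 - x1) / (y2 - y1)
            if x < x_cross:
                inside = not inside
    return inside


@dataclass(frozen=True)
class VirtualLoop:
    loop_id: int
    polygon: List[Point]     # custom shape drawn by the system user
    classes: FrozenSet[str]  # road user classes that may activate the loop


def active_loops(loops: Iterable[VirtualLoop],
                 detections: Iterable[Tuple[str, float, float]]) -> Set[int]:
    """Return IDs of loops occupied by at least one object of a matching class."""
    detections = list(detections)
    return {
        loop.loop_id
        for loop in loops
        if any(cls in loop.classes and point_in_polygon((x, y), loop.polygon)
               for cls, x, y in detections)
    }


loops = [
    VirtualLoop(6, [(0, 0), (4, 0), (4, 4), (0, 4)], frozenset({"passenger_vehicle"})),
    VirtualLoop(3, [(5, 0), (7, 0), (7, 4), (5, 4)], frozenset({"pedestrian"})),
]
detections = [("passenger_vehicle", 2.0, 2.0), ("pedestrian", 6.0, 1.0)]
triggered = active_loops(loops, detections)
```

Because the class filter is part of the loop definition, a pedestrian standing inside a vehicle loop does not activate it, which is what allows priority to be given to one type of road user in a shared lane.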

The capability to detect distinct types of road users, especially vulnerable road users, helps improve road safety. For example, a traffic controller can extend the red light for vehicles to ensure pedestrians cross the intersection safely.

Fig. 3 – Virtual Loop Definition

Automated Traffic Signal Performance Measures

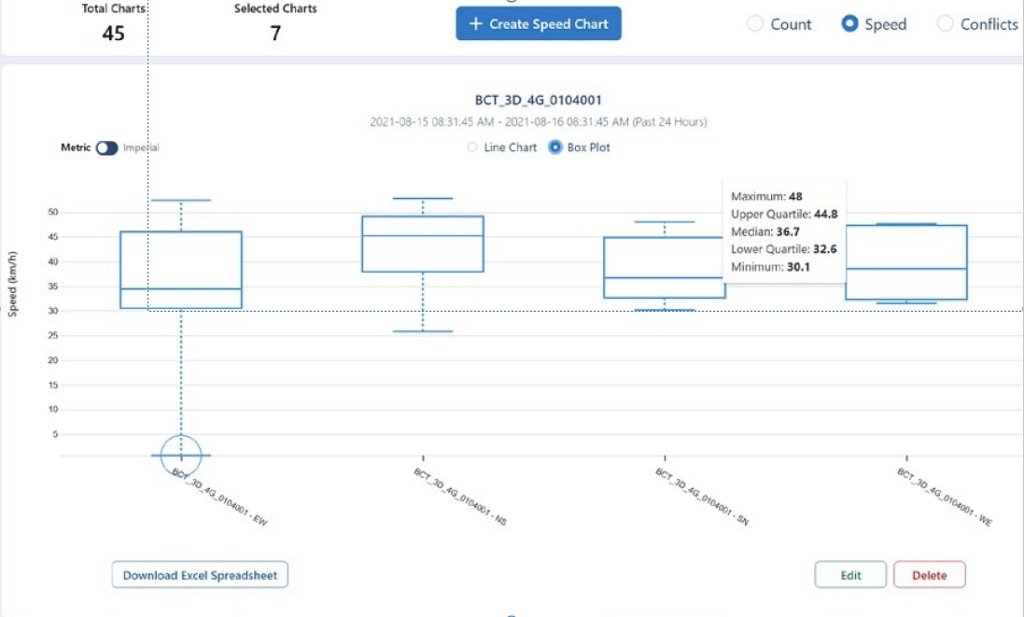

A lidar-based system also enables the collection of aggregated and disaggregated traffic data. Fig. 4 shows two evaluation examples: count data per type of road user and turning movement (left) and speed statistics per approach (right).

Fig. 4 – Evaluation example from intersection monitoring: count data per turning movement (left), speed analysis (right)

Based on the continuous lidar monitoring and the classification of road users (pedestrians, cyclists, passenger vehicles, buses, trucks), a variety of further traffic metrics can be derived, such as intersection saturation flow rate, gap data, occupancy ratio, and signal efficiency.
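The count and speed evaluations can be sketched as simple aggregations over completed tracks. The sketch below assumes each track has already been reduced to a road user class, an entry approach, an exit approach, and a mean speed; the dictionary keys, the approach labels, and the sample values are hypothetical and not taken from the article.

```python
from collections import Counter, defaultdict
from statistics import mean
from typing import Dict, Iterable, Tuple


def turning_movement_counts(tracks: Iterable[dict]) -> Counter:
    """Count tracks per (class, entry approach, exit approach)."""
    counts: Counter = Counter()
    for t in tracks:
        counts[(t["cls"], t["entry"], t["exit"])] += 1
    return counts


def mean_speed_per_approach(tracks: Iterable[dict]) -> Dict[str, float]:
    """Average the per-track mean speed for each entry approach."""
    speeds = defaultdict(list)
    for t in tracks:
        speeds[t["entry"]].append(t["speed_kmh"])
    return {approach: mean(values) for approach, values in speeds.items()}


# Illustrative, made-up tracks: N/S/E/W are the intersection approaches.
tracks = [
    {"cls": "passenger_vehicle", "entry": "N", "exit": "S", "speed_kmh": 42.0},
    {"cls": "passenger_vehicle", "entry": "N", "exit": "E", "speed_kmh": 28.0},
    {"cls": "passenger_vehicle", "entry": "N", "exit": "S", "speed_kmh": 38.0},
    {"cls": "cyclist", "entry": "W", "exit": "E", "speed_kmh": 18.0},
]
counts = turning_movement_counts(tracks)
speeds = mean_speed_per_approach(tracks)
```

Keeping the road user class in the count key is what makes the data disaggregated; summing over the class gives the aggregated turning movement counts.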

Surrogate Safety Analysis

In addition to the measurements and analysis described above, lidar-based intersection / road monitoring can make an even greater contribution to increasing safety in the traffic area.

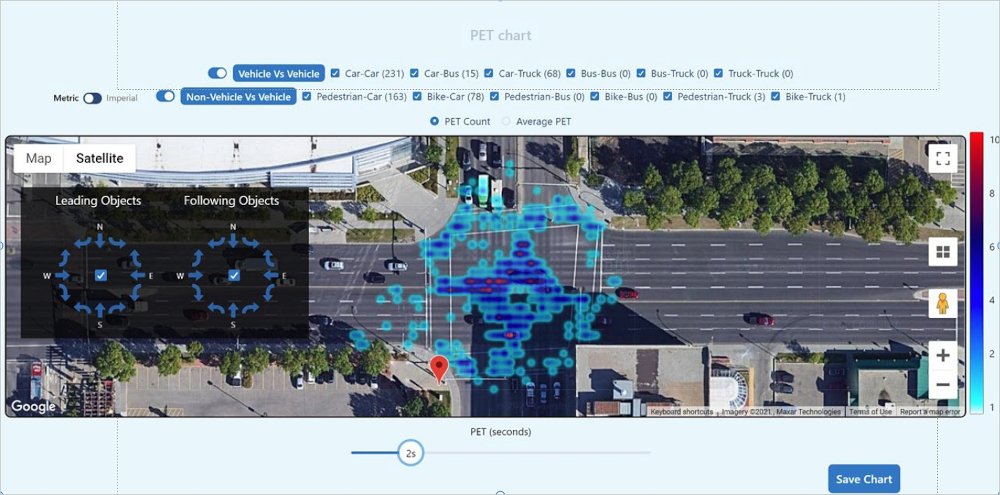

A lidar-based monitoring approach provides real-time access to intersection safety measures, an approach known as surrogate safety analysis. Trajectory data of the road users can be analyzed to identify safety issues, including near misses at an intersection. For each pair of road users in conflict, a Post Encroachment Time (PET) is calculated as the time between the moment the first road user leaves the conflict point and the moment the second reaches the same point. Typically, PETs of less than 2 seconds are considered critical conflicts at intersections.
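The PET definition translates almost directly into code. In the following minimal sketch, each road user is represented by the time interval during which it occupies the conflict point; the function names and the interval representation are assumptions for illustration, while the 2-second threshold is the one mentioned above.

```python
from typing import Tuple

Interval = Tuple[float, float]  # (arrival time, departure time) at the conflict point, seconds

CRITICAL_PET_S = 2.0  # threshold from the text: PETs below 2 s count as critical


def post_encroachment_time(a: Interval, b: Interval) -> float:
    """Time between the first user leaving the conflict point and the second arriving.

    The two intervals may be passed in either order. A negative result means
    both users occupied the conflict point at the same time.
    """
    first, second = sorted((a, b), key=lambda interval: interval[0])
    return second[0] - first[1]


def is_critical(pet_s: float, threshold_s: float = CRITICAL_PET_S) -> bool:
    """Flag a conflict whose PET is below the threshold."""
    return pet_s < threshold_s


# A vehicle clears the conflict point at t = 11.2 s, a pedestrian arrives at t = 12.0 s.
pet = post_encroachment_time((10.0, 11.2), (12.0, 13.0))
critical = is_critical(pet)
```

In this made-up example the gap is roughly 0.8 seconds, so the pair would be flagged as a critical conflict.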

All detected conflicts can be stored with their timestamp, geo-location, the types of road users involved, and their turning movements. Based on that, one can visualize those safety metrics on a map. Fig. 5 shows a snapshot of a heatmap generated over a week with data provided by a lidar sensor in Edmonton, Canada. This example filters out conflicts with PETs over a threshold (in this snapshot, 2 seconds). The great advantage of such heatmaps is that they can be dynamically adjusted to show conflicts between specific types of road users, for example keeping only conflicts between pedestrians and passenger vehicles, so a user can analyze intersection safety per type of road user and turning movement.

Fig.5 – A sample heatmap generated based on a week of conflict data
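The filtering and aggregation behind such a heatmap can be sketched as follows. This is a simplified illustration, not the implementation behind the figure: the record keys, the use of local x/y coordinates in metres instead of geo-coordinates, and the grid cell size are all assumptions.

```python
import math
from collections import Counter
from typing import Iterable, Optional, Tuple


def heatmap_cells(conflicts: Iterable[dict],
                  max_pet_s: float = 2.0,
                  pair: Optional[Tuple[str, str]] = None,
                  cell_m: float = 1.0) -> Counter:
    """Count conflicts per grid cell after filtering by PET and road user pair.

    Conflicts with a PET above max_pet_s are dropped. If pair is given, only
    conflicts between exactly those two classes are kept (order is ignored).
    """
    wanted = tuple(sorted(pair)) if pair is not None else None
    cells: Counter = Counter()
    for c in conflicts:
        if c["pet_s"] > max_pet_s:
            continue
        if wanted is not None and tuple(sorted(c["classes"])) != wanted:
            continue
        cell = (math.floor(c["x"] / cell_m), math.floor(c["y"] / cell_m))
        cells[cell] += 1
    return cells


# Illustrative, made-up conflict records.
conflicts = [
    {"x": 3.1, "y": 4.9, "pet_s": 1.2, "classes": ("pedestrian", "passenger_vehicle")},
    {"x": 3.9, "y": 5.5, "pet_s": 0.8, "classes": ("passenger_vehicle", "pedestrian")},
    {"x": 3.0, "y": 4.0, "pet_s": 1.0, "classes": ("cyclist", "passenger_vehicle")},
    {"x": 10.0, "y": 10.0, "pet_s": 3.5, "classes": ("pedestrian", "passenger_vehicle")},
]
all_cells = heatmap_cells(conflicts, cell_m=2.0)
ped_vehicle_cells = heatmap_cells(
    conflicts, pair=("pedestrian", "passenger_vehicle"), cell_m=2.0
)
```

Re-running the same function with a different `pair` or `max_pet_s` corresponds to the dynamic adjustment of the heatmap described above.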

Fig. 6 shows a snapshot summarizing conflict data and the lidar video sample for a conflict between pedestrians and vehicles.

Fig. 6 – Sample disaggregated conflict data along with a lidar recording highlighting the conflict location

Conclusion:

There is no getting around traffic counts when it comes to optimizing traffic planning. Automated traffic counting is widespread on motorways today. Numerous contact loops, camera systems and radar sensors are permanently installed in order to analyze heavy-duty and car traffic separately in real time. Automatic traffic counting is already being used in some inner-city areas. But depending on the sensor, only data on the number of road users is available. Further restrictions result from poor lighting and weather conditions (with cameras), and information on cyclists or pedestrians is often missing completely (with radar).

With 3D lidar sensors, powerful traffic analysis tools can be created that go far beyond traffic counts and provide reliable traffic data with enhanced metrics, such as real-time incident detection, warning messages, real-time accident prediction, hotspot identification and more. Unlike camera-based systems, lidar technology always respects the privacy of citizens. Approaches like this may help us realize a world where traffic accidents are rare, driving is a positive experience and carbon emissions are reduced.

All pictures by courtesy of Bluecity.AI and Velodyne Lidar