Automotive LiDAR sensors

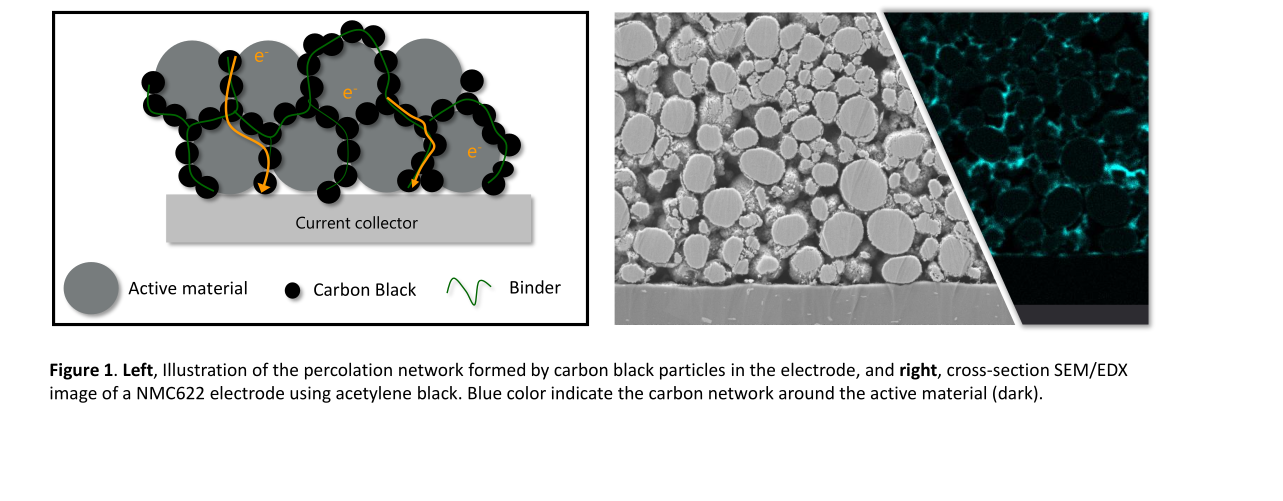

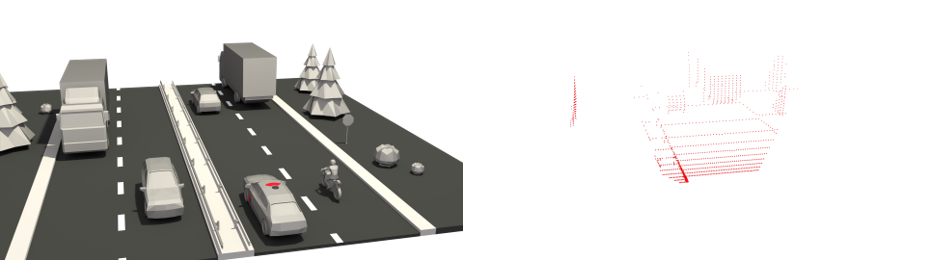

Fig. 1: Traffic scene and point cloud (generated with BlenSor [1]).

On the way to fully autonomous cars, LiDAR sensors are seen by many as the enabling technology. These sensors provide detailed three-dimensional depth maps of the car's surroundings (figure 1) by directly measuring distances. To be more precise, what is measured is the light's round-trip time between the sensor and an object (see figure 2). This time ∆t is proportional to the distance d (assuming that the speed of light c is constant): d = c · ∆t / 2, where the factor 1/2 accounts for the light travelling to the object and back. By deflecting the emitted light pulses into different directions with a scanning mechanism, the LiDAR is able to generate detailed three-dimensional depth maps. High distance resolution paired with angular information, i.e. the measurement direction, is what makes LiDARs essential for self-driving cars.
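As a minimal sketch of the time-of-flight relation d = c · ∆t / 2, the following Python snippet converts a round-trip time into a distance and combines it with a measurement direction to obtain one point of a point cloud. The function names and the axis convention (x forward, y left, z up) are assumptions made for illustration; they are not taken from any particular sensor.

```python
import math

# Speed of light in vacuum (m/s); the value in air differs only slightly.
SPEED_OF_LIGHT_M_S = 299_792_458.0


def distance_from_round_trip(delta_t_s: float) -> float:
    """Return the one-way distance in metres for a round-trip time in seconds."""
    # The pulse travels to the object and back, hence the division by 2.
    return SPEED_OF_LIGHT_M_S * delta_t_s / 2.0


def to_cartesian(distance_m: float, azimuth_rad: float, elevation_rad: float):
    """Convert one range measurement and its direction into an (x, y, z) point.

    Assumed convention (illustrative only): x forward, y left, z up.
    """
    horizontal = distance_m * math.cos(elevation_rad)
    x = horizontal * math.cos(azimuth_rad)
    y = horizontal * math.sin(azimuth_rad)
    z = distance_m * math.sin(elevation_rad)
    return (x, y, z)


# Example: a round-trip time of one microsecond, measured straight ahead.
d = distance_from_round_trip(1e-6)
point = to_cartesian(d, azimuth_rad=0.0, elevation_rad=0.0)
```

A round-trip time of one microsecond corresponds to roughly 150 m, which illustrates the timing precision a LiDAR needs: a distance resolution in the centimetre range requires resolving time differences well below a nanosecond.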

Even though almost all experts in the field of autonomous driving agree that LiDAR sensors are essential for reliable environmental perception, one has to look closely to spot one on our roads. In fact, only one type of LiDAR sensor, the ScaLa ([2] and [3]) in higher-class Audi cars, has been integrated into consumer vehicles up until now.

Why is this, given the excitement in the automotive LiDAR industry caused by huge expectations and market evaluations (predicted automotive LiDAR market revenues in 2032: ∼ 18. billion dollars [4])?

Classical scanning

Today, we are used to LiDARs that utilize rotating parts (e.g. spinning mirrors, figure 3a, or spinning sensors, figure 3b) to cover a wide FOV (Field of View). Both optical paths of the LiDAR, emitter and receiver, are mechanically scanned, either with the help of a rotating mirror or simply by rotating the device itself. This is often referred to as “classical scanning”. These sensors are great for the research and development phase of self-driving cars, but will not make it into future mass-produced cars since rotating parts in sensors are not only expensive but also too bulky and unreliable.

(a) Scanning with a mirror. (b) Scanning by rotating the whole sensor.

Fig. 3: Classical scanning mechanisms in use today (rotation indicated by the arrows).

Hence, every serious LiDAR manufacturer is currently aiming to deliver a low-cost, solid-state sensor, that is, one without any rotating parts. There are, however, major challenges to overcome. First, the initial costs for highly integrated solutions are huge, not to mention the risk. Second, there is the technological question of how to cover a wide FOV without any rotating parts. It is not trivial to maintain the angular resolution and FOV coverage of classical scanning sensors while abandoning every moving part.

In the following we will have a look at a few different designs for solid-state LiDARs by first looking at a receiver concept, followed by different emitter concepts.

Solid state sensors

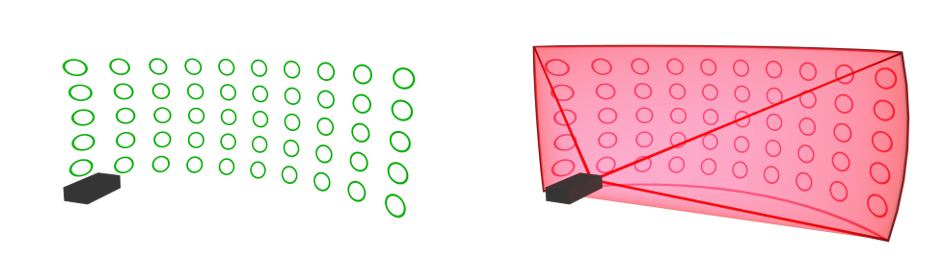

For a solid-state LiDAR there is almost no alternative to a focal plane receiver, i.e. an array of detector pixels (figure 4a) that covers a given FOV. A focal plane detector array works similarly to the CCD (Charge-Coupled Device) chip of a camera in the sense that it passively detects light from different spots in the FOV.

On the emitter side, one can concentrate the emitted optical energy into different regions of the receiver's FOV and thus enhance the signal quality in the illuminated region, at the cost of requiring a scanning mechanism.

(a) Focal plane receiver. (b) Flash LiDAR.

Fig. 4: Solid state receiver and flashing emitter.

FLASH LiDAR

One way to cover a wide FOV without rotating parts is to simply illuminate the whole scene at once and omit a scanning mechanism, see figure 4b. This LiDAR design is called a “flash LiDAR” and floods the scene with a single optical pulse. The drawback is usually a short range, since a single pulse has to provide enough optical energy for the distance measurement of each individual detector, while the maximum energy one is allowed to emit is limited by eye safety regulations. On the positive side, very high frame rates are possible, provided all the data can be processed.
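The energy argument can be made concrete with a deliberately simplified toy model: if a fixed pulse energy is spread evenly over all illuminated detector pixels, each pixel's share shrinks with the number of pixels. The function name and all numbers below are hypothetical, and the model ignores optics, range-dependent losses and the details of eye safety limits.

```python
def energy_per_pixel(pulse_energy_j: float, illuminated_pixels: int) -> float:
    """Evenly split one pulse's energy over the illuminated pixels (toy model)."""
    if illuminated_pixels <= 0:
        raise ValueError("illuminated_pixels must be positive")
    return pulse_energy_j / illuminated_pixels


# Hypothetical values, chosen only to show the scaling.
PULSE_ENERGY_J = 1e-6   # assumed energy budget of a single pulse
TOTAL_PIXELS = 10_000   # assumed size of the focal plane array

# Flash LiDAR: one pulse has to serve every pixel at once.
full_flash = energy_per_pixel(PULSE_ENERGY_J, TOTAL_PIXELS)

# Illuminating only 1 % of the pixels with the same pulse energy.
partial = energy_per_pixel(PULSE_ENERGY_J, TOTAL_PIXELS // 100)

# Ratio of the per-pixel energies in this toy model.
gain = partial / full_flash
```

In this model, illuminating a hundredth of the FOV raises the energy available per pixel by about a factor of one hundred, which is the motivation for the scanning emitter concepts in the following sections.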

MEMS Mirror

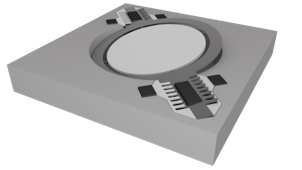

(a) One-dimensional scanning MEMS mirror. (b) Possible scan pattern of MEMS mirror.

Fig. 5: Scanning with a MEMS mirror (arrow and traces illustrate the oscillation).

A MEMS mirror (figure 5a) is a tiny mirror that oscillates in resonance. Even though it is moving, it is still considered solid state in the automotive industry due to its high stability, i.e. its resistance to vibrations. One can either scan a single emitter whose spot profile is stretched, for example to a line (figure 5b left), or a stack of emitters (figure 5b right). Two-dimensional scanning MEMS mirrors with a single emitter beam are also possible. However, these are more challenging to build, operate and utilize.
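A resonant oscillation can be sketched as a sinusoidal mirror angle over time. The snippet below is an idealized model under stated assumptions: the function names, the frequency and the amplitude are hypothetical, and the doubling of the beam deflection relative to the mechanical tilt holds for a simple flat-mirror geometry.

```python
import math


def mechanical_angle(t_s: float, frequency_hz: float, amplitude_rad: float) -> float:
    """Mirror tilt at time t for an ideal sinusoidal (resonant) oscillation."""
    return amplitude_rad * math.sin(2.0 * math.pi * frequency_hz * t_s)


def beam_angle(t_s: float, frequency_hz: float, amplitude_rad: float) -> float:
    """Deflection of the reflected beam.

    Tilting a flat mirror by an angle a turns the reflected beam by 2 * a.
    """
    return 2.0 * mechanical_angle(t_s, frequency_hz, amplitude_rad)


# Hypothetical mirror: 1 kHz resonance, 0.1 rad mechanical amplitude.
FREQUENCY_HZ = 1000.0
AMPLITUDE_RAD = 0.1

# Sample the beam direction at eight instants within one oscillation period.
period_s = 1.0 / FREQUENCY_HZ
samples = [beam_angle(i * period_s / 8, FREQUENCY_HZ, AMPLITUDE_RAD) for i in range(8)]
```

Because the angle follows a sine, the beam moves fastest through the centre of the FOV and slowest at the turning points, so pulses fired at a constant rate are not evenly spaced in angle.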

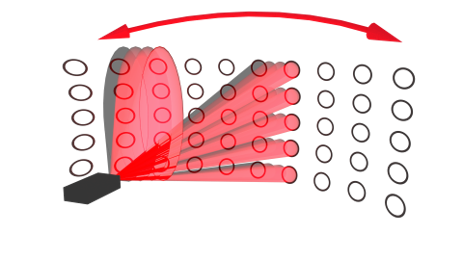

Optical phased array

Fig. 6: Scanning with an optical phased array.

An “optical phased array” basically works like a RaDAR antenna, but in the optical domain. It is a two-dimensional array of waveguides in which each guide is able to adjust the phase of the light it emits, see figure 6. In this way, the light from the individual waveguides can be made to interfere constructively such that the resulting wave, i.e. the emitted pulse, is directed at a certain angle. This scanning mechanism is promising, but its realization in hardware is very challenging. It will probably take a few more years before we see well-performing sensors with optical phased arrays on the road.
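The steering principle can be illustrated with the textbook model of a uniform one-dimensional array, a simplification of the two-dimensional array described above. For an emitter pitch p and wavelength λ, a constant phase step between neighbouring emitters of magnitude 2π · p · sin(θ) / λ directs the main lobe to the angle θ. The function names, the sign convention and the numeric values are assumptions for illustration only.

```python
import cmath
import math


def phase_step(pitch_m: float, wavelength_m: float, steer_rad: float) -> float:
    """Phase increment between neighbouring emitters that steers the beam.

    Sign convention (assumed): the applied phase cancels the path difference
    in the steering direction.
    """
    k = 2.0 * math.pi / wavelength_m  # wavenumber
    return -k * pitch_m * math.sin(steer_rad)


def array_factor(n: int, pitch_m: float, wavelength_m: float,
                 steer_rad: float, look_rad: float) -> float:
    """Normalized field magnitude (0..1) emitted towards look_rad."""
    k = 2.0 * math.pi / wavelength_m
    dphi = phase_step(pitch_m, wavelength_m, steer_rad)
    # Sum the contributions of all emitters: geometric path phase plus applied phase.
    total = sum(
        cmath.exp(1j * m * (k * pitch_m * math.sin(look_rad) + dphi))
        for m in range(n)
    )
    return abs(total) / n


# Hypothetical array: 16 emitters, 0.7 um pitch, 1.55 um wavelength.
N, PITCH_M, WAVELENGTH_M = 16, 0.7e-6, 1.55e-6
steer = math.radians(10.0)

on_target = array_factor(N, PITCH_M, WAVELENGTH_M, steer, steer)
off_target = array_factor(N, PITCH_M, WAVELENGTH_M, steer, math.radians(30.0))
```

In the steering direction all contributions add up in phase, so the normalized magnitude reaches its maximum of 1, while away from it the contributions partially cancel. Changing the phase step moves the beam without any mechanical motion.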

Sequential flashing

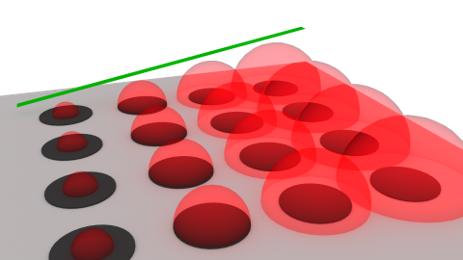

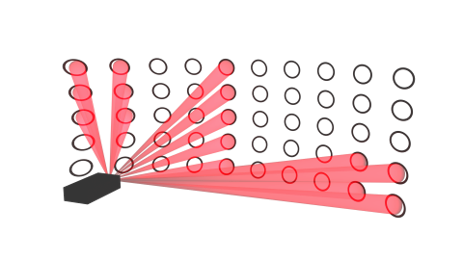

Fig. 7: Sequential flashing with a solid-state LiDAR (three consecutive patterns).

At Ibeo we follow the approach of “sequential flashing”, figure 7. That is, we use an array of emitters, but instead of illuminating every detector pixel at once we illuminate small parts of the FOV one after another and iteratively stitch the whole frame together. In this way it is possible to avoid the drawbacks of a flash LiDAR and the challenging implementation of MEMS mirrors, but still have a solid-state scanning mechanism. Additionally, it is possible to illuminate different regions of the FOV, e.g. first the upper left square, then the line in the middle, followed by the square in the lower right (figure 7).
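The idea of stitching a frame from consecutively illuminated regions can be sketched as follows. This is an illustrative toy model, not Ibeo's implementation: the tiling into rectangular patterns, the function names and the `measure` callback are hypothetical stand-ins for firing one group of emitters and reading out the matching detector pixels.

```python
def tile_patterns(rows: int, cols: int, tile_rows: int, tile_cols: int):
    """Split a rows x cols detector array into rectangular illumination patterns.

    Each pattern is a list of (row, col) pixels that are lit by one flash.
    """
    patterns = []
    for r0 in range(0, rows, tile_rows):
        for c0 in range(0, cols, tile_cols):
            patterns.append([
                (r, c)
                for r in range(r0, min(r0 + tile_rows, rows))
                for c in range(c0, min(c0 + tile_cols, cols))
            ])
    return patterns


def stitch_frame(rows: int, cols: int, patterns, measure):
    """Fire the patterns one after another and assemble a full depth frame.

    `measure(row, col)` is a hypothetical callback returning the distance
    seen by one detector pixel while its region is illuminated.
    """
    frame = [[None] * cols for _ in range(rows)]
    for pattern in patterns:          # one flash per pattern
        for r, c in pattern:          # only the illuminated pixels are read
            frame[r][c] = measure(r, c)
    return frame


# Example: a 4 x 6 array covered by four flashes of 2 x 3 pixels each.
patterns = tile_patterns(4, 6, 2, 3)
frame = stitch_frame(4, 6, patterns, measure=lambda r, c: 10.0 * r + c)
```

Since the patterns are just lists of pixels, nothing forces them to be equally sized tiles; squares, lines or any other regions of interest can be flashed in any order, as long as together they cover the part of the FOV that is needed.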

These four approaches, and others, are currently being investigated and pursued by various companies. It remains to be seen which solid-state LiDAR approach will prove superior to the others, and for which uses. It is an exciting race that I'm glad to be part of.

Authors: Hanno Holzhüter & Michael Kiehn, Ibeo Automotive Systems

References

- [1] Michael Gschwandtner, Roland Kwitt, Andreas Uhl, and Wolfgang Pree. Blensor: Blender sensor simulation toolbox. In George Bebis, Richard Boyle, Bahram Parvin, Darko Koracin, Song Wang, Kim Kyungnam, Bedrich Benes, Kenneth Moreland, Christoph Borst, Stephen DiVerdi, Chiang Yi-Jen, and Jiang Ming, editors, Advances in Visual Computing, pages 199–208, Berlin, Heidelberg, 2011. Springer Berlin Heidelberg. ISBN 978-3-642-24031-7.

- [2] Audi AG. Scan to me. https://audi-encounter.com/de/Laserscanner, 2017. accessed: 2019-03-10.

- [3] Vision Mobility. Das Auge des Autos. https://www.vision-mobility.de/de/magazin/fachartikel/konnektivitaet-laserscanner-das-auge-des-autos-automatisierung-1124.html, 2018. accessed: 2019-03-10.

- [4] Woodside Capital Partners in Collaboration with Yole. The Automotive LiDAR Market. http://www.woodsidecap.com/wcp-proudly-releases-the-automotive-lidar-market-report-in-collaboration-with-yole-developpement/, 2018. accessed: 2019-03-12.